In this post, I will showcase a quick example of how to use an AI such as Dall-E as a tool in the ideation process.

The object we want to design is an outdoor baby carriage for hiking.

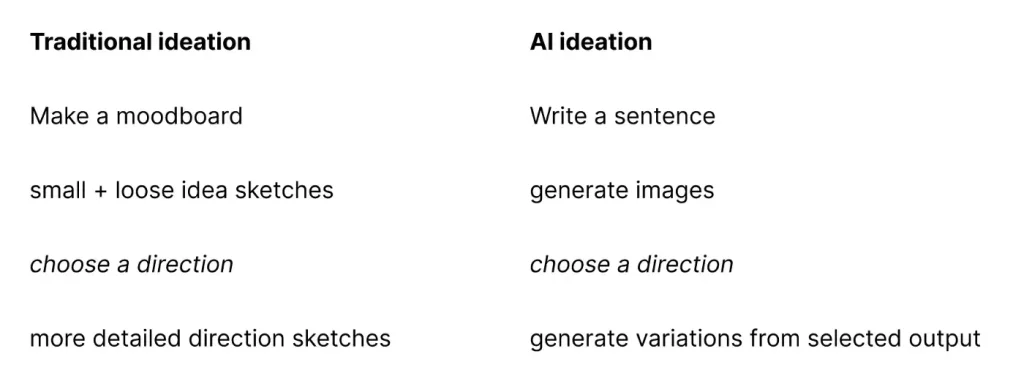

A simplified version of an ideation process

Example of traditional ideation

As a designer, you might create a moodboard to help you communicate a direction to yourself or others, followed by some quick ideation sketches. Finally, you highlight your best idea with a more refined sketch.

(Apologies for the quality of my example sketches. Since I transitioned to UX, I haven’t done many product drawings.)

Moodboard

Idea sketches

Direction sketch

Dall-E examples

Below you will see how I try different variations to point the AI in the direction I would like to explore.

The prompt sentence used is written below the generated images.
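To make the idea of trying variations more concrete, here is a minimal sketch of how prompt sentences could be varied systematically before pasting them into Dall-E. The `build_prompts` helper and the style and detail lists are hypothetical, chosen only for illustration; they are not the prompts used for the images in this post.

```python
from itertools import product


def build_prompts(subject, styles, details):
    """Return one prompt sentence per (style, detail) combination."""
    return [
        f"{style} of {subject}, {detail}"
        for style, detail in product(styles, details)
    ]


# The subject comes from this post; the modifiers below are
# hypothetical examples, not the author's actual prompts.
subject = "an outdoor baby carriage for hiking"
styles = ["Product sketch", "Studio photo"]
details = ["large all-terrain wheels", "lightweight frame"]

prompts = build_prompts(subject, styles, details)
for prompt in prompts:
    print(prompt)
```

Changing one modifier at a time makes it easier to see which words actually move the generated images in the direction you want.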

Exploring a direction with AI

As in traditional ideation, you can refine a direction when working with AI. With Dall-E, you can create variations from a selected output.

Above is the selected output and below are the variants generated from it.

Dall-E with an image prompt

Besides text inputs, Dall-E can also take an image combined with a sentence as input. It looks like this:

Use outputs as inputs

In my opinion, AI is fascinating and inspiring, but very hard to steer in the right direction. So for now I would not use AI output as the final output. Instead, I would combine it with the traditional process and keep the most interesting AI outputs in front of me when I begin to draw.

(I do, however, believe the AI beat me in this round.)

Designer interested in how improvements in tech will change the creative process.

- The author gives a brief example of how to use Dall-E, an AI system that can produce art and realistic visuals from a description given in plain language, as a tool in the brainstorming process.

- According to the author, the process of AI ideation is as simple as this:

  - Write a sentence.

  - Go through the generated visuals.

  - Choose a direction to get the most accurate realization of your idea.

  - Receive generated variations from the selected output.

- The article compares the traditional ideation process with the AI ideation process and covers how to combine them to get the best result in the most efficient way.