
We designers continuously refine our design process and try different methodologies. We work on a problem, research, analyze findings, develop solutions, take feedback, and iterate. Much has been written about how each of these steps can be improved and about the methods associated with them, but we often forget to emphasize how important it is to stay neutral and patient throughout the process.

Biases often creep into our design decisions. Jumping to a conclusion, taking a shortcut, letting your personal experience influence your findings, a gap in communication, and more can easily lead you in the wrong direction. It is even harder to stay on course when you work as part of a team. Everyone has their own view, and those views may or may not align. Although multiple views enrich the process, they also multiply the chances that biased opinions will find their way into our design decisions.

As designers, we should avoid such biases and understand the importance of staying neutral while evaluating everyone’s opinions. Remember, acknowledging a problem is the first step toward fixing it.

1. Confirmation bias

Confirmation bias stems from our existing beliefs or theories. Individuals tend to look for evidence that backs their existing beliefs while ignoring the flip side. Once they have formed an opinion, they embrace information that supports it and ignore or underweight information that contradicts it. This is often driven by wishful thinking.

For example, say you come across a review of a product that you like. The review discusses the pros and cons of that product. You are likely to focus on the pros more than the cons and may not consider that the product might not be the best fit for you.

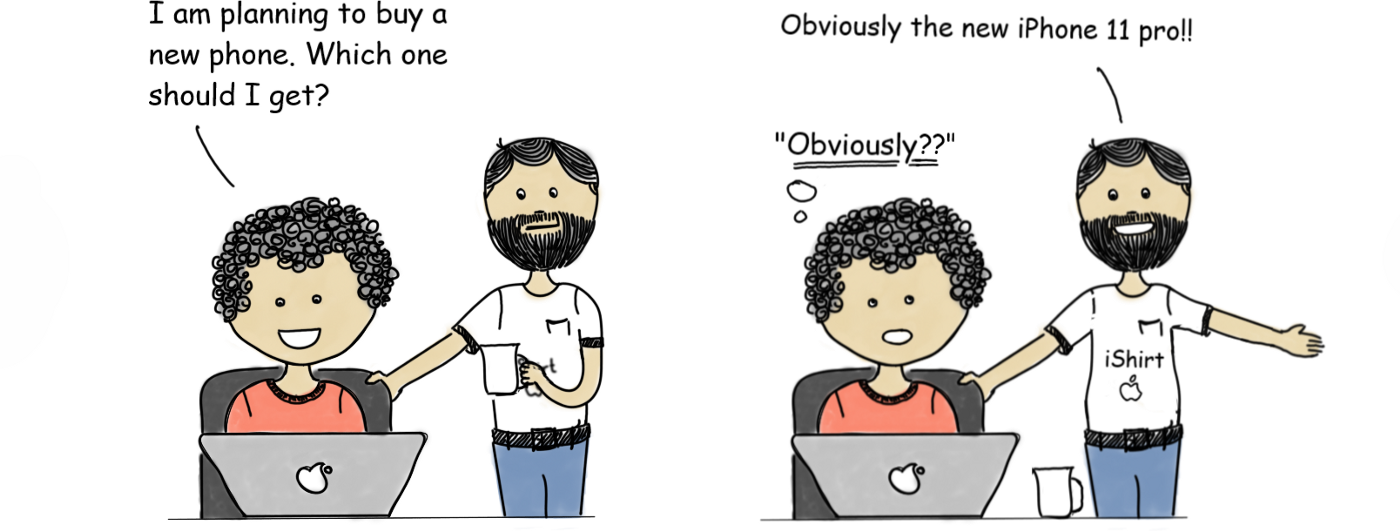

Confirmation bias is strong in an iPhone vs Android phone discussion. Each side downplays the benefits of the other phone while overvaluing the benefits of the phone they consider better. Illustrations: Abhishek Umrao

During the design process, we stick to beliefs formed in our initial research more often than we realize, and this can drive a project in a different direction altogether. Once you have invested time and energy in something, going back and reconsidering it becomes even more difficult. We hold the belief so close to our heart that we start ignoring the facts against it, and contradicting evidence can even end up making the belief stronger (the backfire effect).

How do we avoid confirmation bias?

- Question your opinions at every stage, and discuss your thoughts with others.

- Surround yourself with a diverse group of people and listen to their dissenting views. Most importantly, stay neutral and weigh each point equally.

- Actively direct your attention rather than letting existing beliefs direct it.

Related biases: the endowment effect, anchoring/focalism, backfire effect, belief bias, conservatism, choice-supportive bias.

2. Framing bias

Framing is the context in which choices are presented. Framing bias occurs when people’s decisions are swayed by how the choices are framed rather than by the choices themselves.

For example, subjects in an experiment were asked whether they would opt for surgery; some were told the survival rate was 90%, while others were told the mortality rate was 10%. The survival framing led to greater acceptance, even though both statements describe the same situation (source: Thinking, Fast and Slow by Daniel Kahneman).

Relatable? A burger that is “75% fat-free” definitely sounds healthier than one with “25% fat” in it, even though both describe the same burger. Reframing statements to make them more appealing to consumers is a common marketing practice.

For a more design-relevant example, consider these two statements:

- “80% of the users were able to find the new Bookmark button.”

- “20% of the users weren’t able to find the new Bookmark button.”

The first framing suggests that everything is working fine, while the second makes redesigning the Bookmark button seem like the obvious solution.

How do we counteract framing bias?

- Always frame a decision in at least two different ways and check whether the framings still represent the same thing; this helps ensure the bias doesn’t originate with you. Do the same exercise when you evaluate the information you receive.

- Ask for more information to avoid ambiguity. You will make better decisions when you are aware of the context. A study by Ayanna K. Thomas and Peter R. Millar suggests the framing effect can be reduced when the information needed to make an informed decision is more accessible.

- Do not jump to conclusions. Resist the urge to make decisions quickly; you have to invest time and thought to understand the context.

Related bias: ambiguity effect.

3. False consensus bias

False consensus bias occurs when we assume others hold the same beliefs, values, preferences, or findings that we do. Because our own beliefs are so readily accessible to us, we tend to extend them to others.

For instance, if you are in favor of abortion rights and opposed to capital punishment, then you are likely to think that most other people share these beliefs (Ross, Greene, & House, 1977).

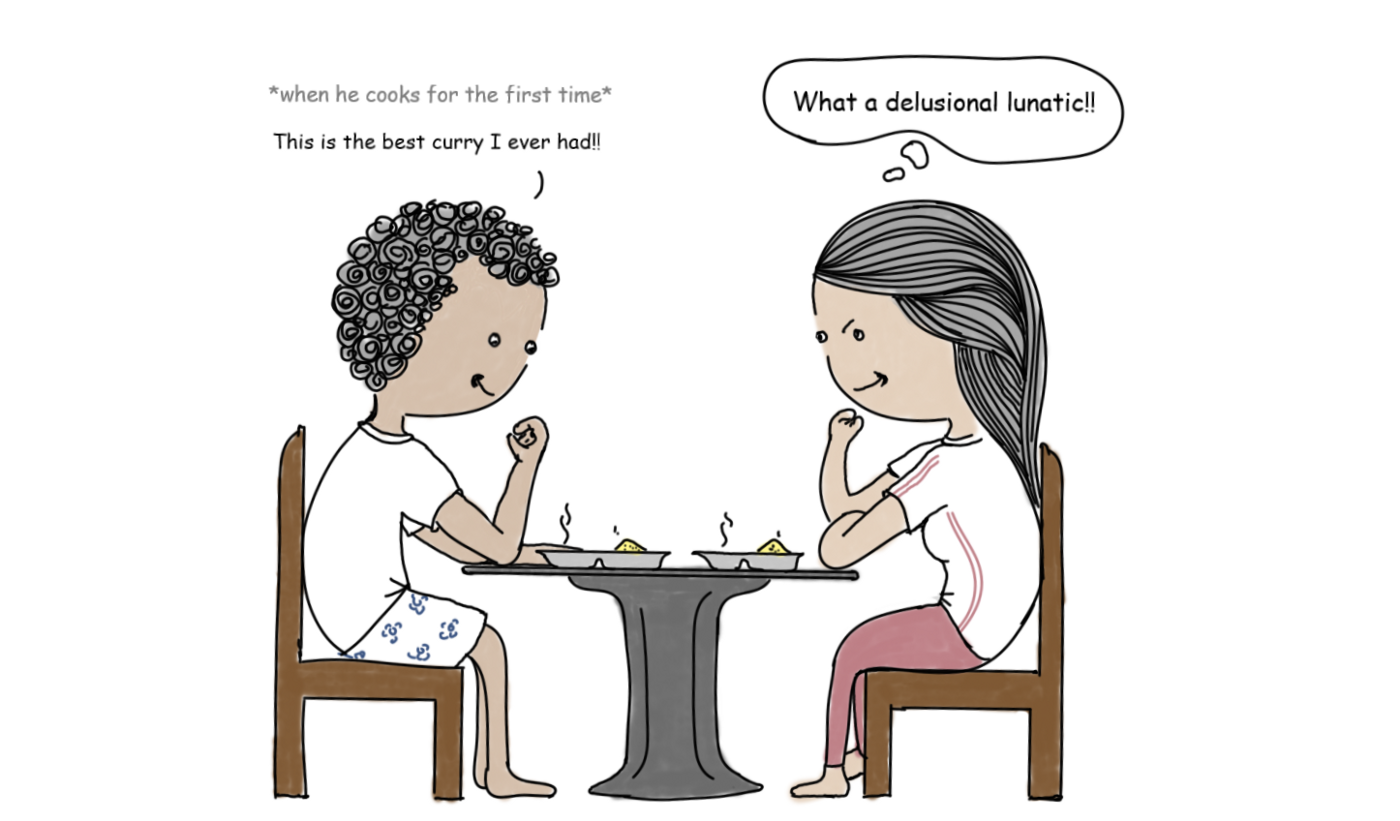

We often project our opinions onto others. As the illustration shows, assuming another person will definitely like what you like is an example of false consensus bias.

The people we spend our time with often share our beliefs and opinions, so we start to assume that the majority of people share them too. Since these beliefs are always at the forefront of our minds, we are more likely to notice when other people hold similar attitudes, which leads us, once again, to overestimate just how common they are.

This happens more often than we realize when a team builds a new product or feature. We often have to make decisions based on limited information, which requires us to make certain assumptions.

How do we make sure that these are valid assumptions and not just based on our personal opinions or beliefs?

- List all the findings or assumptions that were made with inadequate or no research.

- Give your colleagues a walkthrough of your research, leaving no detail out. Ask them to be critical of your findings during this exercise, and at each step, articulate how you reached your conclusion.

- Do more research to validate whether your assumptions or half-baked findings hold true for a larger set of your target audience.

Related bias: projection bias.

4. Availability heuristic

The availability heuristic refers to people unknowingly giving more weight to the information they recall first or most easily. People tend to overestimate the likelihood of events that happened recently or are talked about a lot.

A study by Karlsson, Loewenstein, and Ariely (2008) showed that people are more likely to purchase insurance to protect themselves after a natural disaster they have just experienced than they are before it happens.

In design, we frequently become more sensitive to the user challenges we have heard about most recently and end up prioritizing them over other pressing challenges. This problem gets worse when multiple stakeholders are giving their input.

How do we avoid availability bias?

- Conduct primary research with a varied set of users, or look at usage analytics, to identify user challenges instead of relying only on stakeholders’ opinions.

- Generating insights from primary research can be tricky because we can fall prey to the availability heuristic here as well. For example, if the analysis is not done thoroughly, we may give more importance to the most recent or most memorable interview. Design tools such as personas, empathy maps, and customer journey maps help us look at the research data from a broader point of view.

Related bias: attentional bias.

5. Curse of knowledge bias

This is a cognitive bias that occurs when an individual unknowingly assumes that others have the same background knowledge and understand as much about a topic or situation as they do. Ever been new on a project and found it hard to follow what everyone’s talking about? Well, you might not be at fault. The people in the group may be under the impression that what they know about the system is obvious and that you should already have a decent idea of what they are talking about. This is the curse of knowledge.

For example, when you work in an ecosystem for a long time, you become familiar with its terminology and jargon. You start using these terms in your designs, assuming they are self-explanatory, but when you show the designs to users, they are confused.

This has happened to me a couple of times. My friends who were familiar with investing found everything they advised obvious, but I was always lost. This is one reason I refrained from investing for a long time.

This isn’t limited to the scenario in this example; similar situations can arise during knowledge transfer from a product manager to a designer, from a designer to a developer, or even during a peer-to-peer discussion.

How do we break this curse (of knowledge)?

- When you have been involved with a system for a long time, you tend to become biased, so take a step back and think from a different perspective. Discuss your designs from different angles, and don’t assume your audience knows what you’re talking about.

- Get a fresh perspective by putting yourself in the shoes of a first-time user. Show your wireframes and prototypes to a fresh set of users before finalizing your designs.

- Leverage insights from someone who has just joined your team, and actively take feedback from first-time users of your released product. Asking existing users can also help identify information that is missing from your product.

- Find out how familiar your target audience is with the jargon used in your company, industry, and product.

6. Bias blind spot

The bias blind spot is recognizing the impact of biases on others’ judgment while failing to see the same impact on one’s own. It is more common than we realize. In fact, in one study of 661 adults, researchers found that only one said they were more biased than the average person.

Learning about cognitive biases means being completely honest about whether our own decisions are biased and consciously examining our actions and behaviors. In short, practice what you preach.

Can you guess the bias in the following example? 😉

This happens more often than not with me and my friends: they say they won’t drink anymore because of a hangover, but after a couple of days, they are up for another round of beer.

I am a Product Designer & an occasional trekker, who loves playing and watching football // Gaming // Technology // Cars // Travel // More at abhishekumrao.com.