
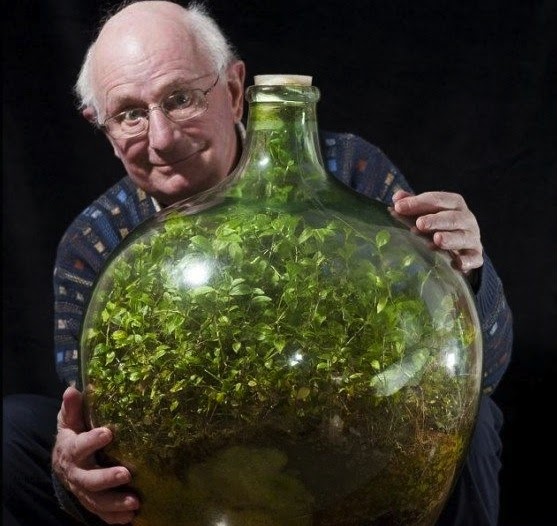

Maybe you’ve seen the meme knocking around the internet: a photo of David Latimer, a British octogenarian who bottled a handful of seeds in a glass carboy in 1960 and left it largely untouched for almost 50 years (uncorking it only once, in 1972, to add a little water). His 10-gallon garden created its own miniature ecosystem and has thrived for more than half a century. [1]

In the realm of technology, closed platforms are like Latimer’s terrarium: they can be highly functional, beautiful, and awe-inspiring, but they can only grow as big as their bottles. Today’s business landscape, however, requires an architecture that breaks out well beyond these glass walls. Businesses are trying to sequence as many innovative technologies as possible, as quickly as possible, to automate their processes, workflows, tasks, and communications.

For something like the original iPhone, a terrarium was just fine. Everything a user needed to enjoy its features was baked right into the first version of iOS. Keeping the system closed ensured the quality of the apps and created a seamless overall experience, which contributed to the phone’s success even though it offered far less functionality than other mobile devices of the time.

Josh Tyson

Josh Tyson is the co-author of the first bestselling book about conversational AI, Age of Invisible Machines. He is also the Director of Creative Content at OneReach.ai and co-host of both the Invisible Machines and N9K podcasts. His writing has appeared in numerous publications over the years, including Chicago Reader, Fast Company, FLAUNT, The New York Times, Observer, SLAP, Stop Smiling, Thrasher, and Westword.