
The Federal City Council approached IDEO to lead a futuring workshop on reimagining Washington, D.C.’s downtown in the post-COVID era. With many government employees now working remotely, the central business district’s identity needed to change. The goal of the workshop was to generate innovative ideas to sustainably bring people back and establish D.C. as a model post-COVID global city.

IDEO decided to take the 100+ stakeholders through a futuring process designed to help them envision desired futures that might feel out of reach based on their day-to-day experience. As part of this, the team wanted to create a tangible visual experience that felt both nostalgic and forward-looking. And so we looked to the retrofuturism of the View-Master.

The team knew the feeling they were after and reached out to me to bring in some AI tools. I’d collaborated with Rob, the team’s visual designer, on generated imagery before and was excited to prompt engineer together again. Making a View-Master image, of course, means creating stereoscopic images. I’d never done this before, but felt confident that GitHub or Hugging Face would hold the answer.

And, indeed, I discovered MiDaS, a depth estimation model. From there I found and forked @m5823779’s stereo image generator so that it could run on my M1 Mac (that is, on the CPU instead of CUDA).
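For readers curious how a depth map becomes a stereo pair, here is a minimal sketch of the general idea. It is not the code from the forked generator. It assumes the depth map is already available (for example, as MiDaS output), treats larger depth values as nearer to the viewer, and uses plain Python lists so it runs without dependencies. The function name `make_stereo_pair` and the `max_shift` parameter are hypothetical, chosen for illustration.

```python
def make_stereo_pair(image, depth, max_shift=8):
    """Return (left, right) views of `image`, shifted by depth-derived disparity.

    `image` and `depth` are lists of rows with matching dimensions.
    Assumption: larger depth values mean "nearer to the viewer".
    """
    lo = min(min(row) for row in depth)
    hi = max(max(row) for row in depth)
    span = hi - lo

    left, right = [], []
    for pixels, depths in zip(image, depth):
        width = len(pixels)
        left_row, right_row = [], []
        for x in range(width):
            # Normalise depth to 0..1; a flat depth map produces no shift.
            nearness = (depths[x] - lo) / span if span else 0.0
            shift = round(nearness * max_shift)
            # Near content moves right in the left-eye view and left in
            # the right-eye view; indices are clamped at the image edges.
            left_row.append(pixels[max(x - shift, 0)])
            right_row.append(pixels[min(x + shift, width - 1)])
        left.append(left_row)
        right.append(right_row)
    return left, right


# A one-row "image" whose right half is nearer to the viewer.
left, right = make_stereo_pair([[10, 20, 30, 40]], [[0, 0, 1, 1]], max_shift=1)
```

This backward-sampling shortcut avoids holes in the output but smears edges where depth changes abruptly, which is one source of the odd artifacts that generated 3D conversions can produce.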

After some back and forth between me, Rob, the rest of the team, and Midjourney, we had our seven base images. Converting them to 3D took only a few seconds per image once I’d installed the Python prerequisites in a virtual environment (never install Python packages globally; you will end up reformatting your computer).

The plan from there was to get physical View-Master reels printed, but we needed them for the in-person event in only two weeks. It seemed like that might not be possible.

So, in the event that we couldn’t find a printer, I built two digital viewer versions. The first (which you can see here) works with the sadly discontinued Mattel View-Master Virtual. The second is a WebXR experience that you can see on any VR headset with a web browser.

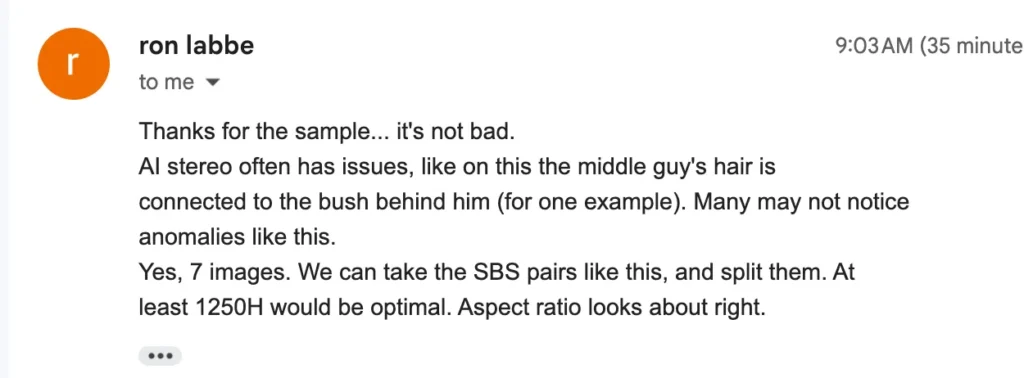

While I’m glad I built these (after all, that means I can share them with you), the magic truly happened when Evanne got in touch with Ron Labbe at Studio 3D. Ron was up for the challenge of printing on our compressed timeline and forgiving of the (hilarious) mistakes the combination of generated imagery and generated 3D-ification created.

He did, however, need a higher resolution than I’d produced. Luckily I already had a copy of Topaz Gigapixel, a really useful upscaling utility.

I found the results really moving. I’d created the images, seen them in digital 3D through a variety of viewfinders and free viewing, and I have a collection of View-Master reels. Yet seeing the film grain and holding the View-Master up to a light helped me not just see, but feel, the future of D.C. I hope the workshop participants, and the rest of the IDEO team, felt a little of what I did. It made a future of greenspace, community, and micro-mobility seem just a little bit more possible.

Jenna Fizel

Jenna Fizel is a senior director of emerging technology at IDEO. They are inspired by the unexpected ideas that arise when translating the abstract world of information into the tangible world we can see, touch and taste. At IDEO Jenna helps teams explore design questions through building with tools like AI, XR, and digital fabrication. They believe these tools can sometimes be part of the design solution, but are always useful during the design process when used as new ways of seeing. In addition to leading IDEO’s emerging technology practice, they run an 80-person internal learning group that makes technical skills more accessible to the IDEO community, regardless of discipline or position. Prior to IDEO, they were a junior partner at an interactive design agency where they worked for museums, libraries, and corporations designing and building mixed physical/digital spatial experiences. They also co-founded a fashion technology startup and served as the CTO of a technology-focused intimate apparel company. Jenna sees software as a mode of thinking that solves problems by making systems and then seeing where they fall apart, an approach formed by their academic background in computational geometry at MIT. Their ambition is to one day own enough digital fabrication tools to craft all their furniture at home. Up next: a CNC.
