
This article was originally published by Nielsen Norman Group at https://www.nngroup.com/articles/chatgpt-productivity/ and is reprinted by permission.

The recent introduction of ChatGPT and similar generative artificial-intelligence tools has caused much gnashing of teeth. Commentators complain that one can trick the AI into saying nasty things and that the output is often misleading.

As to the first, I say: so what. You can trick Excel into calculating things wrong by using erroneous formulas. And if you want a word processor to produce nasty text, you just type it in. The measure of a professional tool is not whether it does something wrong when you deliberately abuse it. The question is whether results are good when the tool is used as intended.

Much worse is when ChatGPT is used correctly and generates text that sounds very convincing but includes complete fabrications. Hopefully, future versions will be more accurate, but, again, I don’t think that erroneous output necessarily dooms an AI tool if it’s used correctly. Of course, if you rely on ChatGPT without checking its output, then falsehood will bite you. But if a human checks the AI-generated text, then edits and corrects it, will the results be worth the human effort?

Luckily, a new research study provides insight into exactly this question.

Research Study

Shakked Noy and Whitney Zhang from MIT recently published the findings from an empirical study of business professionals who used ChatGPT to write a variety of business documents.

Study participants were 444 experienced business professionals from a variety of fields, including marketers, grant writers, data analysts, and human-resource professionals. Each participant was assigned to write two business documents within their field. Examples include press releases, short reports, and analysis plans — documents that were reported as realistic for the type of writing these professionals engaged in as part of their work.

All participants first wrote one document the normal way, without computer aid. Half of the participants were randomly assigned to use ChatGPT when writing a second document, while the other half wrote a second document the normal way, with no AI assistance.

When considering the results reported below, we should note that most of the participants in the ChatGPT condition were using the AI tool for the first time. (30% of all participants had used ChatGPT before.) Usually, with any tool, there is a learning curve: the more users use the tool, the more efficient they become at using it. It’s great when a tool has sufficient learnability that users can use it successfully on their first attempt. But for professional use, the level of productivity that users achieve over time is often more important. In any case, the present study showed that ChatGPT has great usability for first-time users (who represented the majority of the AI group); the results may be even better for users with more experience with the tool.

Once the business documents had been written, they were graded for quality on a 1–7 scale. Each document was graded by three independent evaluators who were business professionals in the same field as the author. Of course, the evaluators were not told which documents had been written with AI help.

As an aside, I want to point out that it’s disappointingly rare for UX studies to assess the quality of the work produced with the tool that’s being studied. Output is, after all, the goal of much computer use, and the quality of this output is an essential element in judging the user interface. As shown in the present study, one common way to measure quality is by having the work graded by independent evaluators.

Results: Faster Work, Better Results

There’s often a conflict between working faster and getting good results (a phenomenon known in cognitive psychology as the speed–accuracy tradeoff). However, in this study, the business professionals who used ChatGPT were faster at producing their deliverables and the rated quality of these deliverables was also higher.

The first round, in which documents were produced without AI help, produced the same results in both groups, confirming that the assignment of participants to study conditions was indeed random. In other words, it was not the case that participants in one group were somehow more talented or skilled than the participants in the other group. Thus, we can feel confident that the differences measured for the second round of writing were indeed caused by the use of ChatGPT.

In the second round, the business professionals using ChatGPT produced their deliverable in 17 minutes on average, whereas the professionals who wrote their document without AI support spent 27 minutes. Thus, without AI support, a professional would produce 480/27 ≈ 17.8 documents in a regular 8-hour (480-minute) workday, whereas with AI support that number would increase to 480/17 ≈ 28.2. This is a productivity improvement of 59%, since 27/17 ≈ 1.59. In other words, ChatGPT users would be able to write 59% more documents in a working day than people who do not use ChatGPT, at least if all their writing involved only documents similar to the ones in this study. This difference corresponds to an effect size of 0.83 standard deviations, which is considered large for research findings.
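The arithmetic above can be reproduced with a minimal sketch. It uses only the two average task times and the 8-hour workday stated in the article; the function and constant names are illustrative, not taken from the study.

```python
# Average minutes per document in the second round, as reported in the article.
MINUTES_WITHOUT_AI = 27
MINUTES_WITH_AI = 17
WORKDAY_MINUTES = 8 * 60  # the article's regular 8-hour workday


def documents_per_day(minutes_per_document, workday_minutes=WORKDAY_MINUTES):
    """Number of documents that fit in one workday at a given task time."""
    return workday_minutes / minutes_per_document


def productivity_gain(minutes_before, minutes_after):
    """Relative increase in output rate when the task time drops.

    The workday length cancels out, so the gain depends only on the
    ratio of the two task times.
    """
    return minutes_before / minutes_after - 1


baseline = documents_per_day(MINUTES_WITHOUT_AI)
assisted = documents_per_day(MINUTES_WITH_AI)
gain = productivity_gain(MINUTES_WITHOUT_AI, MINUTES_WITH_AI)

print(f"Without AI: {baseline:.1f} documents per day")
print(f"With AI:    {assisted:.1f} documents per day")
print(f"Productivity gain: {gain:.0%}")
```

Run as written, this prints roughly 17.8 and 28.2 documents per day and a gain of 59%. Because the workday length cancels out, the 59% figure holds for any length of working day, as long as all the writing resembles the documents in this study.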

Generating more output is not helpful if that output is of low quality. However, according to the independent graders, this was not the case. (Remember that the graders did not know which authors had received help from ChatGPT.) The average graded quality of the documents, on a 1–7 scale, was much better when the authors had ChatGPT assistance: 4.5 (with AI) versus 3.8 (without AI). The effect size for quality was 0.45 standard deviations, which is on the border between a small and a medium effect for research findings. (We can’t compute a percentage increase, since a 1–7 rating scale is an interval measure and not a ratio measure. But a 0.7 lift is certainly good on a 7-point scale.)

Thus, the biggest effect was in increased productivity, but there was also a nice effect in increased quality. Both differences were highly statistically significant (p < 0.001 for both metrics). Remember that these improvements were recorded even though most participants had no prior experience with ChatGPT. Long-term improvements will likely be much bigger, as users discover better ways of using the tool and adapt their work styles accordingly. (This is known as the task–artifact cycle: the largest benefits from a new tool come from adapting the way you work to the new capabilities offered by the tool. This is in contrast to automating existing business processes without change, which is often suboptimal.)

Why Better Performance with ChatGPT

So much for the quantitative results. As is often the case in UX, it is more interesting to consider the “why” than the “what.” Why did business professionals perform better when writing documents with the help of ChatGPT? The present research is not completely satisfying in answering this question, possibly because the scientists were not UX professionals, but rather economists interested in productivity research. However, some interesting insights did emerge from their research.

First, it seems that the use of ChatGPT reduced skill inequalities. While in the control group, which did not use AI, participants’ scores for the two tasks were fairly well correlated at 0.49 (meaning that people who performed well on the first task tended to perform well on the second, and people who performed poorly on the first also did so on the second), in the AI-assisted group, the correlation between the performance in the two tasks was significantly lower at only 0.25. This lower correlation was primarily due to the fact that users who got lower scores in their first task were helped more by ChatGPT than users who did well in their first task.

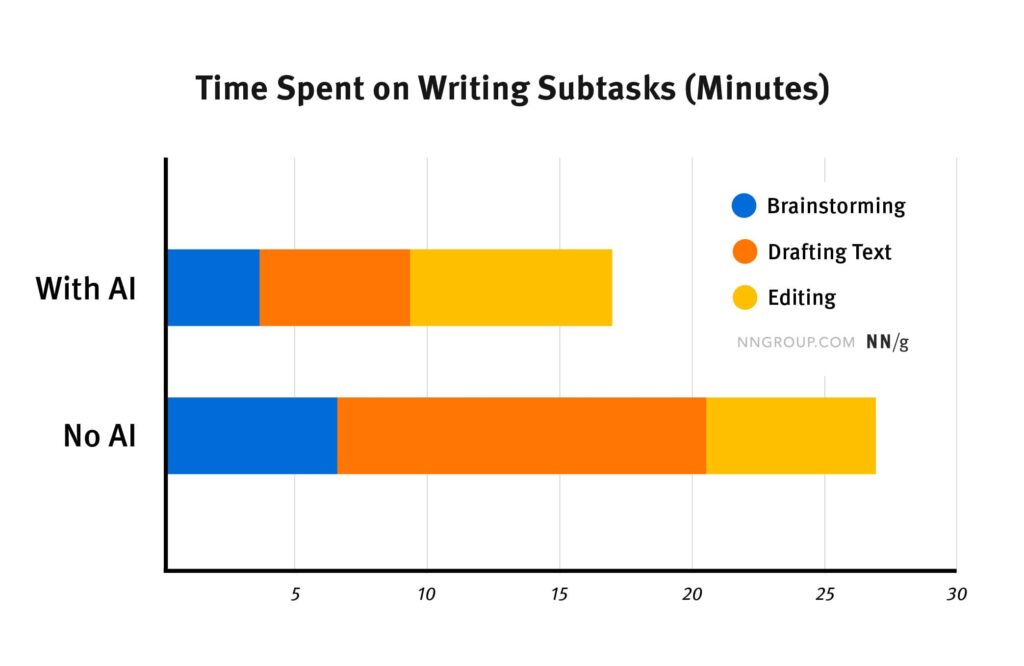

Second, professionals were asked to report how they had allocated their time across three different phases of the writing process: brainstorming, writing a rough draft, and polishing this draft. Their responses suggested that using ChatGPT changed the way users had spent their time.

In the first round (with no AI assistance), business professionals spent about 25% of their time brainstorming, 50% writing a rough draft, and 25% editing this draft to produce the final, polished deliverable. When using ChatGPT, participants possibly spent a little less time brainstorming (though the difference is within the margin of error, and so cannot be relied on). The time spent generating rough drafts was more than cut in half, since most of this workload was offloaded onto ChatGPT. And, interestingly, the time spent polishing the draft doubled.

One step cut by more than half and one step doubled: you might think that we’re roughly even. No: the rough-draft time was originally twice as long as the editing time, so cutting it by more than half saves more minutes than doubling the editing time adds. This explains the overall reduction in task time when using ChatGPT: more time was saved in drafting than was expended on additional editing. Conversely, it’s possible that the additional time spent editing the final deliverable contributed to the higher-rated quality of the AI-assisted documents.
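The comparison between time saved on drafting and time added on editing can be sketched as follows. This is an illustration under stated assumptions, not the study's own calculation: it applies the rounded 25%/50%/25% split to the 27-minute no-AI average, holds brainstorming constant, and treats the drafting reduction as a free parameter. The study reports only that drafting time was "more than cut in half", so the 0.6 value below is purely hypothetical.

```python
# Assumption: the article's rounded round-one shares applied to the
# 27-minute average task time of the no-AI condition.
BASELINE_MINUTES = 27
BASELINE_SHARES = {"brainstorming": 0.25, "drafting": 0.50, "editing": 0.25}


def net_change_minutes(drafting_cut, editing_multiplier=2.0,
                       total_minutes=BASELINE_MINUTES):
    """Change in total task time (negative means time saved).

    drafting_cut: fraction of drafting time removed (0.5 = cut in half).
    editing_multiplier: factor applied to editing time (2.0 = doubled).
    Brainstorming time is assumed unchanged.
    """
    drafting = total_minutes * BASELINE_SHARES["drafting"]
    editing = total_minutes * BASELINE_SHARES["editing"]
    minutes_saved = drafting * drafting_cut
    minutes_added = editing * (editing_multiplier - 1)
    return minutes_added - minutes_saved


# Exactly halving the drafting time only offsets the doubled editing time.
print(net_change_minutes(drafting_cut=0.5))

# Hypothetical: a 60% cut in drafting time yields a net saving.
print(net_change_minutes(drafting_cut=0.6))
```

Under these assumptions, an exact halving of drafting time breaks even against a doubling of editing time. The net saving comes from drafting being cut by more than half, plus any reduction in brainstorming time.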

Thus, the productivity and quality improvements are likely due to a switch in the business professionals’ time allocation: less time spent on cranking out initial draft text and more time spent polishing the final result. If this analysis holds up under more detailed qualitative research, it seems that ChatGPT’s main contribution is to save users substantial time on the production of rough text.

Bar chart showing the average time in minutes spent on the three stages of writing a document: [1] deciding what to do (called “brainstorming” by the researchers), [2] generating the raw text for the first draft, and [3] editing this draft to produce the final polished deliverable. The top bar shows the average times spent by users who employed ChatGPT, whereas the bottom bar shows the average times for users who wrote their document the normal way, without AI assistance. The difference between the two “brainstorming” time estimates is not statistically significant.

This chart is based on recalculated data from Noy and Zhang (2023).

Limitations

Noy and Zhang should be applauded for giving us empirical data about real business professionals using ChatGPT for realistic business tasks. This is a huge improvement over the morass of rants and personal opinions that have cluttered up social media since the launch of ChatGPT in November 2022. That said, the present study does have some weaknesses, but that’s true of all research, since nothing would ever get done if we had to wait for the perfect study.

The authors studied a range of mid-level business professionals producing realistic but fairly short documents. (Remember that the time to write a document was 27 minutes without AI support.) It’s great to study a range of professions, which vastly increases the generalizability of the findings compared to limiting a study to a single type of user. Still, to fully understand the impact of AI on business professionals, we would want data from an even wider range of jobs, both across domains and across levels, including senior professionals such as executives, senior engineers, and physicians. We also need larger-scoped tasks that would require hours or days to complete. (Obviously, for budget reasons, early research cannot use study participants who need to spend multiple days in the lab for a task. But such studies have been done in other realms, and need to be done here as well.)

As already mentioned, the present research has too little qualitative insight into the details of users’ behaviors and why they did what they did. Furthermore, the estimates of how users divided their time between different stages of document generation were based on self-reported numbers. We know that self-reported data is weak in UX studies, so a more careful way of estimating these numbers would be preferred in future research.

Conclusion

Current releases of generative AI such as ChatGPT are notorious for sometimes producing biased or false output. But the synergy of AI and skilled humans can surpass both. Both when debating AI and when considering whether and how to introduce AI tools in business, we should strongly consider ways of making AI and human business professionals work together. It’s not a question of AI replacing skilled humans, because AI can serve as a tool for augmenting the human intellect, along the lines originally envisioned by Doug Engelbart as his goal for advanced user interfaces.

Reference

Shakked Noy and Whitney Zhang (2023): Experimental Evidence on the Productivity Effects of Generative Artificial Intelligence, MIT Economics Department working paper. Retrieved March 13, 2023, from https://economics.mit.edu/sites/default/files/inline-files/Noy_Zhang_1_0.pdf

Jakob Nielsen

Jakob Nielsen, Ph.D., is a cofounder of Nielsen Norman Group and the founder of the discount usability movement for fast and cheap iterative design, including the heuristic evaluation and the 10 usability heuristics. He was named “the king of usability” by Internet Magazine, “the guru of Web page usability” by The New York Times, and “the next best thing to a true time machine” by USA Today. Nielsen formulated the eponymous Jakob’s Law of the Internet User Experience. Prior to starting NN/g, Dr. Nielsen was a Sun Microsystems Distinguished Engineer and a Member of Research Staff at Bell Communications Research, the branch of Bell Labs owned by the Regional Bell Operating Companies. He is the author of 8 books, including Designing Web Usability: The Practice of Simplicity, Usability Engineering, and Multimedia and Hypertext: The Internet and Beyond. Dr. Nielsen holds 79 United States patents, mainly on ways of making the Internet easier to use. Full bio: https://www.nngroup.com/

- The article weighs the accuracy and reliability concerns raised by ChatGPT and similar AI tools against the findings of a recent study by Shakked Noy and Whitney Zhang from MIT.

- While there is a need to ensure the accuracy and reliability of AI-generated output, the study highlights the potential of these tools for enhancing human productivity and quality in professional settings.