
Presented at DCUX 2019 as “Fly On The Wall” talk by Lia D. McCabe

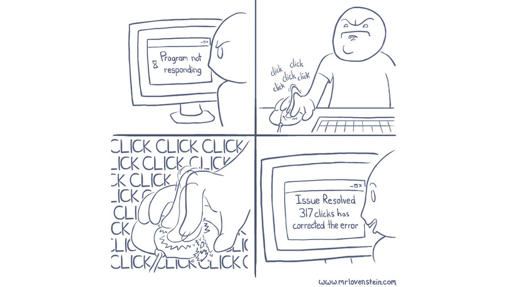

If only this worked!

Tip 1: Use a naturalistic approach

By taking a naturalistic approach, or ‘in-the-wild’ observation, we can collect qualitative and even quantitative data that is more reliable, and more likely to capture true-to-life user frustrations, than data gathered in a structured setting.

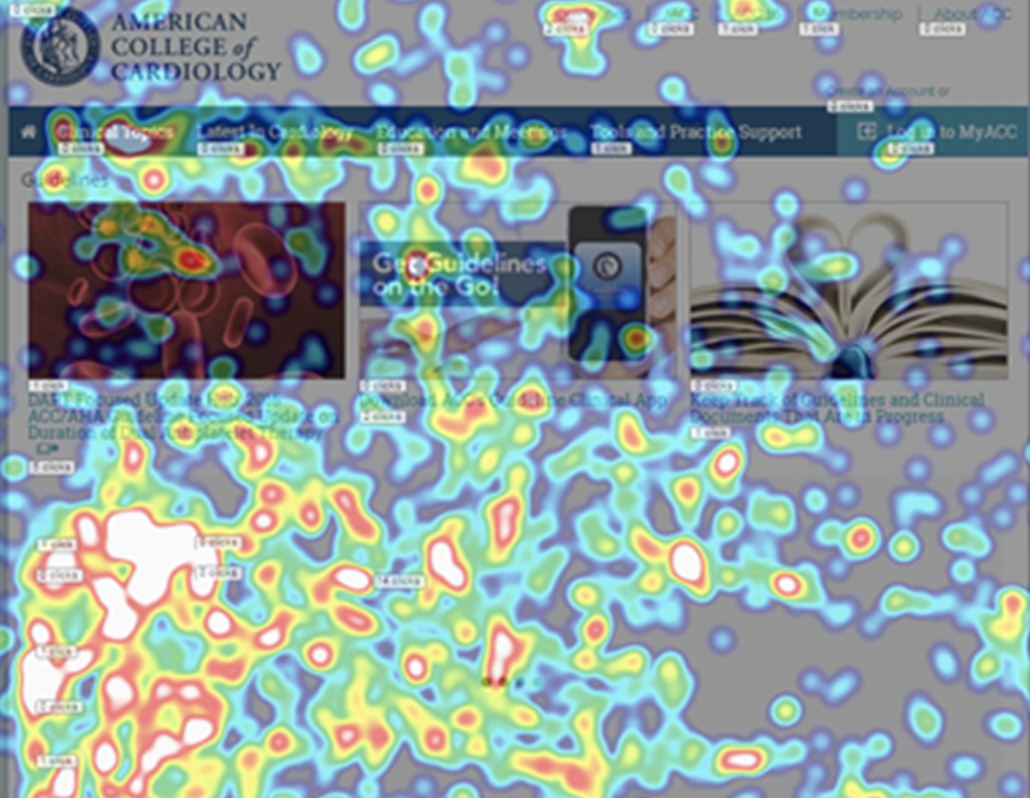

One effective approach is to use session replays and heatmaps as tools for unmoderated remote testing. For instance, at my company, we wanted to find out how to improve our medical Guidelines page so that documents and related materials would be easier to find. To do this, we took a naturalistic approach: we observed recorded user sessions and reviewed heatmaps of the Guidelines page. There are many good tools available, but there is one in particular I’d recommend, which leads me to Tip 2.

Tip 2: Utilize the right tools

It’s important to have tools that work with us, not against us, and in my experience Mouseflow is that tool.

Mouseflow lets us record and replay, or watch live, user behavior to identify the problems users encounter. Because it is just a snippet of code placed on a website, no recruitment or scheduling is necessary. You can turn the code on and off at any time and adjust your sample size whenever you need to, which makes usability testing quick, easy, and flexible. Session replays show exactly what users are doing on screen, while heatmaps track clicks, mouse movements, scrolling, and other data points to help you get to the bottom of your research questions. This tool gave us many actionable insights for our redesign.

Heatmap session using the Mouseflow tool (www.mouseflow.com). White/orange areas indicate a high level of user activity in the form of clicks.

For instance, when reviewing the video replays of our Guidelines page, we observed that users were struggling to find what they were looking for. Users repeatedly scrolled and clicked the ‘more’ button until they ultimately abandoned the page. We also observed users typing several variations of the same query when looking for related content that the search was not returning. Some of these sessions lasted as long as 20 minutes. From this, we inferred that users could not tell which text was a clickable link and which was a title or header, and this confusion led to frustration in the form of ‘rage clicks’.

We also noted that users typically were not interested in, or did not notice, the triptychs at the top of the page, as indicated by low heatmap activity in the scrolling, attention, and click categories. This informed our research question and led us to ask follow-up questions such as: How can we make the search and browse functionality more efficient? Are the triptychs valuable to users, or should we consider removing them? This leads me to my third and final tip.

Tip 3: Hypothesize & Validate

Based on this data, we formed a new design hypothesis: if we remove the triptychs and move the Guidelines content to a landing page in a more easily digestible format, then users will be more likely to find what they need and less likely to become frustrated.

With the knowledge gained through the naturalistic approach, we redesigned the Guidelines page and ran a second Mouseflow test. This time, heatmaps indicated that users were often finding what they were looking for right away, typically clicking on PDFs or the latest Guidelines. Sessions were shorter, and there were no rage clicks.

In summary, qualitative research can generate many ideas for product improvement because it reveals possibilities that quantitative research alone does not surface. So, if you’re short on time and resources, give the fly-on-the-wall method a chance.

Lia is a User Experience Researcher at the American College of Cardiology, where she leads the creation of effective user experiences for cardiologists and patients worldwide. She values community volunteerism and has previously served as a board member for UXDC. Tweet her @LiaDMcCabe.