
The television series Black Mirror is an example of technological design fictions in popular culture, exploring a multiverse with a dystopian bent.

Post-pandemic, one concern is a strengthened association between potential online surveillance and chilling effects. The chilling-effect hypothesis holds that people modify their online behavior in response to potential surveillance (e.g., voice recordings, workplace monitoring, cameras, and phone spyware).

Chilling effect: Self-censorship due to online surveillance.

For some, privacy is an afterthought. After all, all our data is being collected all the time anyways and I have nothing to hide, so what’s the harm? Who cares about this when there aren’t any costs beyond a few creepily specific targeted ads? Why worry about something before it happens?

Well, harm affects others differently. It’s been proposed that there is a greater chilling effect on women and younger internet users (source). Expression and freedom of speech have long been fundamental democratic ideals.

The younger the participant, the greater the chilling effect.

— Jon Penney

Though it’s commonly believed that young people do not care about privacy, as they utilize social media and other forms of digital self-expression with greater gusto, it’s more accurate perhaps to say that young people simply navigate internet privacy differently than adults.

Many are digital natives — they’re savvy and cynical.

As our physical and digital lives intertwine, another cautionary note is the chilling effects associated with ‘sousveillance’: surveillance that looks less like a traditional overhead security system and more like someone snapping a video without our consent, sharing our location through social media, or even our being near a device that is constantly pinging a cell tower.

The idea of ‘opt-out’ used to be enough, but a real ‘opt-out’ now must include those in our social circle (a difficult task indeed).

Systemic privacy is more difficult than individualist privacy.

How might designers combat this descent into ‘noise, chaos, and colors’?

To start, I recall Calm Technology, a term coined by PARC researchers Mark Weiser and John Seely Brown, which proposes that tech ought to ‘amplify the best of technology and the best of humanity’.

Humans should not act like machines.

Machines should not act like humans.

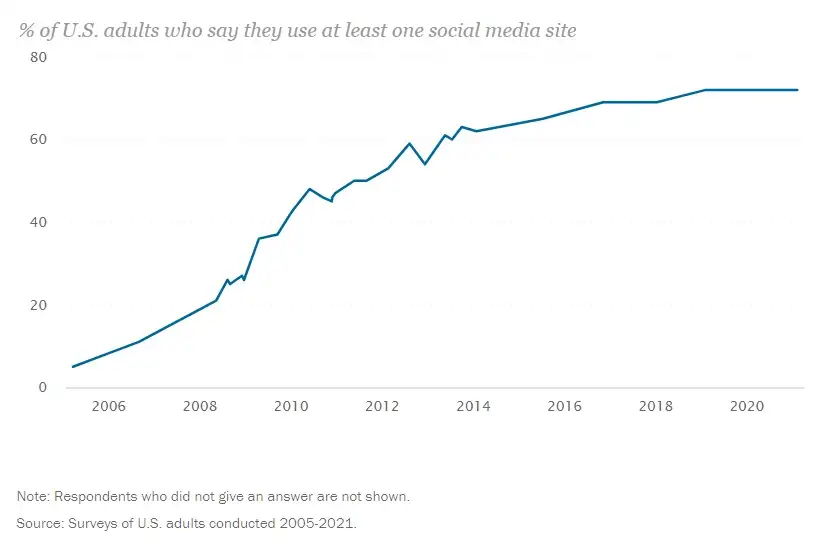

Yet the above reality is unlikely. Tools pervade and influence our behavior. Americans spend a growing number of hours online, and a growing share of them on social media.

No realm of life is untouched: from parenting, relationships, and school to more difficult subjects like cyberbullying, violent crime, and harassment. What’s online often leaks into the real world.

One definition of ethics is ‘virtue ethics’: doing what’s right for your own sake. Rather than calculate utilities, analyze pleasure (Epicureanism), or simply endure (Stoicism), it proposes a lifelong pursuit of character building. Beyond the absence of darkness, it is the presence of ‘light’ (goodness).

In addition to mitigating potential harms, this definition proposes that there is an ethical responsibility to contribute toward the promotion of the greater public good. As a designer, this may be seen as a higher pursuit than a paycheck.

When making a design decision, consider whether what you’re building contributes toward greater:

- Human agency

- Equity

- Inclusion

Every decision is a crossroads: either one contributing toward greater privacy, equity, and agency or one that fights against it in big or small ways. There is no neutral.

The design future on our current trajectory isn’t one that I feel comfortable with. It’s not one that I feel contributes toward more freedom; rather, it’s one where shame culture and cancel culture are more pervasive. It’s a reactive, angry, and envious culture that feeds the worst side of human nature, and it is especially detrimental for youth who are still exploring identity and learning anger management and self-discipline.

Key issues

Let’s dig deeper. Here are other notes to consider in the potential metaverse:

- As data governance questions persist in new places, consider what assumptions are being made when external guidance is used for local contexts.

- Widespread dissemination of digital tech leads to digital town halls (places of rapid communication and chaotic change).

- Scarcity of opportunities for young people (tension between youth vs. those currently in power; different visions of the future).

Data protection laws are limited

- Protection is not just about opting in, which relies on individual consent. It’s easy to click yes.

- There is no systemic counterweight to the systemic accumulation of data.

Access to online information (where our every move is recorded) unveils huge swaths of information that might disturb the better angels of our nature. Examples are powerful. When the online world becomes increasingly noisy, what’s gory, shocking, or disturbing is rewarded — perverse incentives for online behavior.

Consider this: when I was a kid, I got to be a stupid kid without too many people watching. If I were a kid today, the capabilities of modern devices would allow my stupidity to be broadcast and associated with my name for a very long time. Is the risk worth the potential harm?

Conclusion

Slow step-by-step change is better and more sustainable and allows us to test new things with a minimum of difficult disruption in society.

— Jimmy Wales, on Gradualism

If there’s any takeaway, remember that laws are limited. People tend to revert to the easiest possible default decision when overwhelmed with information and decisions, as they often are by consent documents and pop-ups full of legal jargon.

The right amount of technology is usually the ‘minimum needed to solve the problem’. The right amount of design is the minimum needed to solve the problem.

As a designer, my suggestion is simple: take time to stop working and look around you. The world is diverse and bigger than your company. The internet is bigger than any one country. Design ought to fulfill a need. Technology remains a tool, and it should not drive us toward a decline in human agency by feeding our basest instincts. Remember those who face negative externalities from the lifestyle you choose and the things you design.

Interaction Designer at Google. Seattle-based designer responsible for data marketplace dynamics. Illustrator and green tea drinker. Writing on design, creativity, and technology.

- Nowadays, people tend to change their online behavior because of a constant feeling of surveillance, a phenomenon called the “chilling effect”. It affects all users, but younger Internet users feel it more strongly and navigate online privacy differently than adults.

- Nothing is private now – every realm of human life stays on the Internet forever.

- Under such circumstances, the author is not comfortable with the future that design’s current trajectory points to. She advises always considering, when making design decisions, whether what you’re building contributes toward greater human agency, equity, and inclusion.

- Technology should remain a tool, design should satisfy a need, and neither should cause a decrease in human agency by pandering to our basest instincts. According to the author, a designer should always keep in mind those who face negative externalities from what they design.