
In 2015 I started developing a Capstone Studio course for the MS in Strategic Design and Management at Parsons. Rather than extending the “design thinking” approach practiced in the preceding Design Research studio, I wanted to create a pathway to implementation: a move from theory to praxis that brings a concept to life and “makes it happen”.

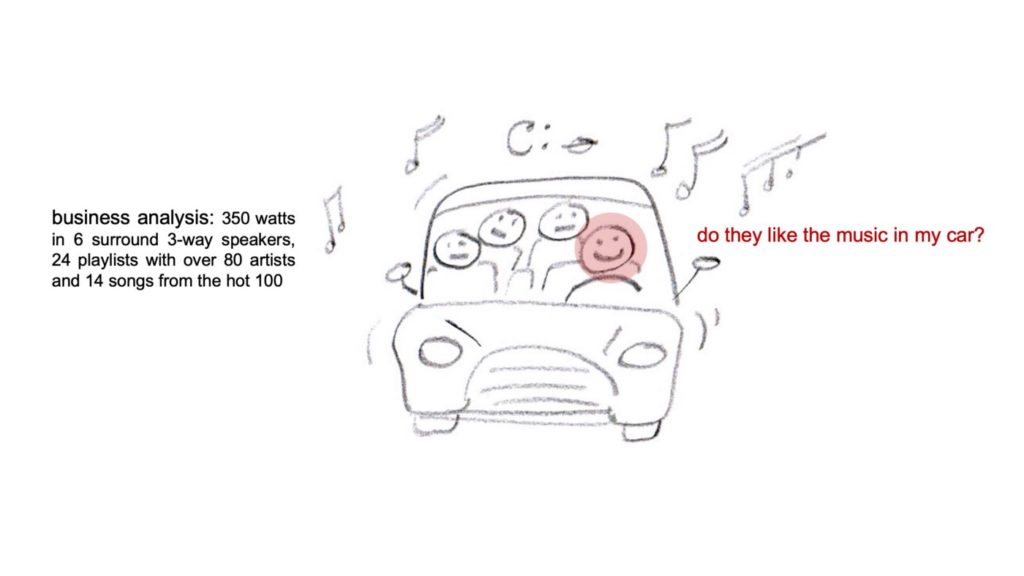

This pathway follows a straightforward process for developing a strategic plan; I was not reinventing the wheel. Coming from Strategic Design, however, there are some nuances. For example, instead of benchmarking, we evaluate the landscape into which a new project will be integrated and examine how it can fit in and stand out. We also do this in an analogous, related area, to change perspective and see our challenges with different eyes. All of this revolves around human considerations: numbers only tell part of the story. They might say how successful something is, but not why.

A key moment for me was a workshop I held for executives from Shenzhen, where one participant, who visibly struggled with our approach, posed a blunt question: “Tell us how much before design thinking, and how much after design thinking.”

It is hard for executives to base decisions on insights that can appear speculative, and they love numbers, good numbers. In Design Research, where we uncover opportunities in an area in need of innovation, we have a powerful tool at the heart of the design process: observations. If decision-makers see with their own eyes, in video recordings of our observations, how people struggle, for instance, with the interface of their platform, and hear them swearing because it does not meet their expectations and does not keep its promise, they understand. I have experienced very quiet, reflective C-suites after presenting such observations.

Early in my career, while leading a team at IDEO, I showed an observation video with such real-life scenarios to the executive board of Deutsche Telekom. It had a strong impact: they decided to completely reevaluate their service offering, create an interaction design language across all divisions, and, for the first time in the company’s history, develop their own products incorporating the new interaction design. In a very short period of time, we developed a usability strategy and brought 24 products to market, the T-concept product line.

Today we also work on social innovation projects in Strategic Design; the spectrum of our work has evolved dramatically. Observations are very much valuable in these new fields of application as well. However, most concepts remain in the drawer. If a concept is considered for implementation, the questions about numbers become even trickier: How can we make the impact of a project predictable and calculable? How can we measure impact when testing the functions of a new project during the development phase?

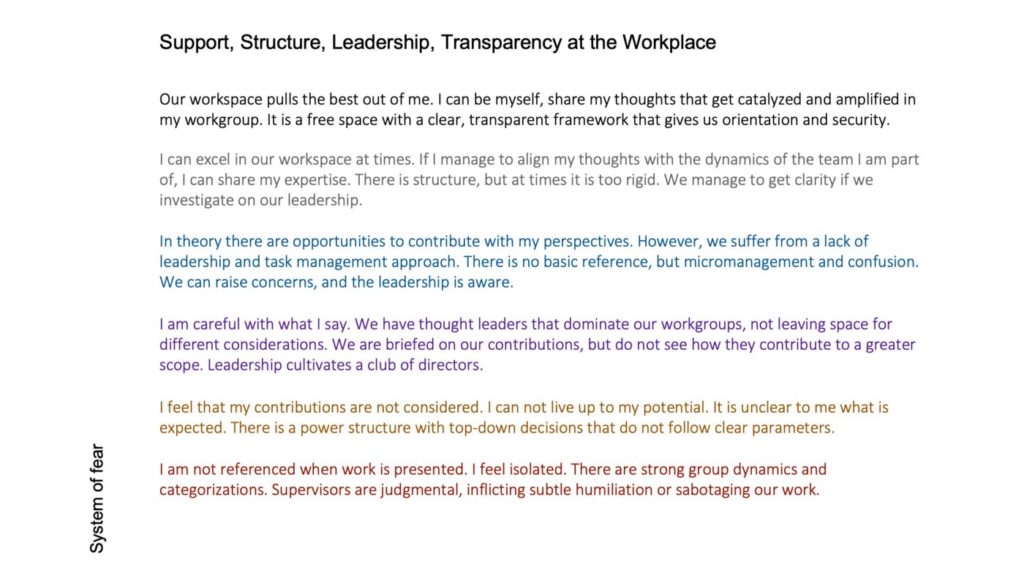

Drawing on my experience in practice, I further developed the “development matrix” with my students. In this matrix, we assign qualities to the desired outcomes and to the perceptions of the people we are addressing. We also define what a worst-case scenario could be. In a way, we define what exactly is “good” and what is “bad”. Putting that into words is a demanding task, but I learned that if I cannot do it in front of my clients, if I cannot clearly formulate what the desired human perception of a product or service should be, I cannot hold my own against the numbers people in the room.

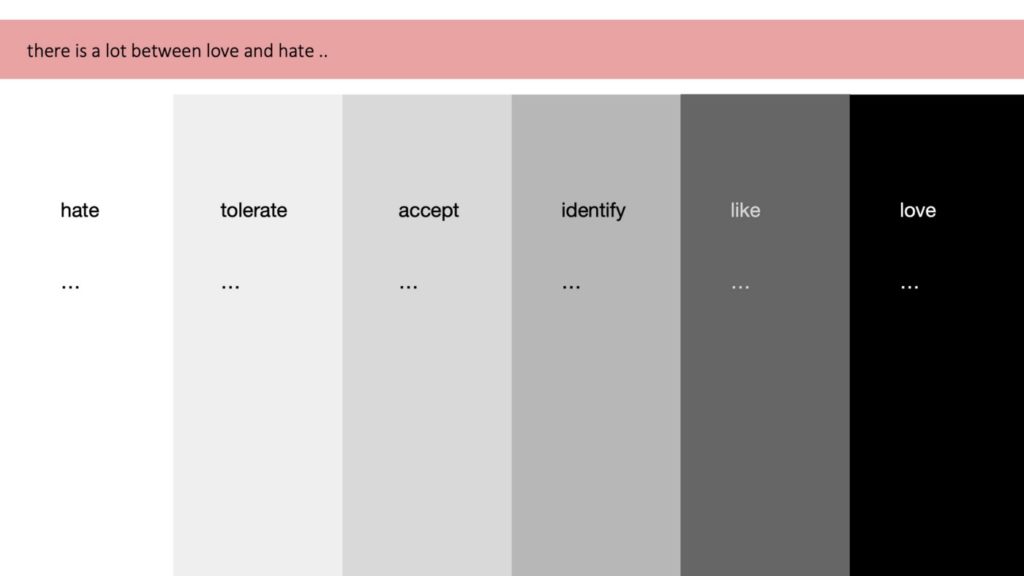

I also had to learn that I need to communicate what lies in between “good” and “bad”, because despite all the polarization of our times, there is a whole spectrum in between. We need to define those shades of gray to better understand where we are, since our aim should be to improve. For the numbers people, too, it is rarely either zero or a million.

Therefore, in a development matrix, we define qualities that we assign to the various functions of a project, and we formulate what lies in between. In a way, we move away from grading with numbers.

The development matrix is an integral part of the roadmap and evolves with prototyping and testing activities, or with risk analysis for anything that is too big to be tested, such as social innovation. In this context, we need to design when and how we can evaluate where we stand on the spectrum between “good” and “bad”. We can only find out with people, and, as with the observations, we need to document how a function is perceived. Our aim is to convince decision-makers that there is room for improvement and that a certain part of a project needs attention. If we manage to do so, it is our turn, the hour of the designer, to come up with numbers. We might need more time, money, resources, or people to move towards “as good as possible” and fulfill the purpose. For some projects, that could mean selling a product perhaps 560,000 times, after design thinking. For others, it could mean contributing to a change process in a larger system, which we need to monitor to find out whether it is heading in the desired direction.

I designed a modular framework, “How to develop a strategic plan”, of which the development matrix is one part. Below are generic guidelines for the development matrix.

Development Matrix

What are the performance expectations?

Context

In Design Research you defined values to develop a culture code for your project. In the previous modules, you defined key functions to uphold those values. Now, in the Development Matrix, you will define a spectrum of possible outcomes to estimate and assess the performance of those key functions. It follows the Prototyping and Testing phase, so you can apply what you learned from testing a few functions to the full range of functions that are crucial to the project.

A Development Matrix is NOT a business analysis. It is not a reflection of how you are doing. Take the perspective of the users, service recipients, and people who are impacted by your project. You are moving from what the concept does to how it delivers. The matrix shows the qualities and expectations assigned to how functions deliver, and the ways to assess them. It is a tool to evaluate performance.

Approach

Define a spectrum of qualities to evaluate the performance of key functions.

Define values and expectations, not numbers. You design tools to assess how components of both the business development and the project are perceived.

Create a table that shows the parameters you apply to assess the delivery and all impacts of the project, responding to expectations derived from research and testing insights.

Design tools to assess the perception and performance of key functions of your project.

What are functional expectations? What are emotional perceptions? What are real needs?

Create a spectrum of outcomes that includes several steps between best- and worst-case scenarios.

Show and explain in detail how you plan to assess these qualities or performance objectives.

Rather than making decisions, work on the expectations and possible improvements. Always show a range of opportunities and engage all stakeholders in a decision-making process.

Consider…

Within the spectrum of possible perceptions, what is still tolerable?

What are values and qualities that cannot be compromised?

At what point are improvements, further investments and development needed?

As for the business and ROI, what are the performance objectives?

How do project qualities respond to potential threats?

How do project strengths reflect on the identified opportunities?

How are diversification efforts accepted?

How would you further define the qualities assigned to each function?

How would you extend the spectrum between threat and opportunity, bad and good?

How do those qualities relate to the purpose of the project as defined in the concept pitch?

Do they respond to the needs that were evidenced in the Landscape Analysis?

Is there a connection to the expectations and frustrations experienced by people who tested certain functions of the project?

Christian Schneider

Innovation Design Strategist with international experience in product, service, and process innovation, organizational design, and change management; former program leader at IDEO and design manager at Studio De Lucchi; consultant and executive coach. Clients include Ariston, Deutsche Telekom, Deutsche Bank, European Technology Fund, Ferrari, Fraunhofer Institut, FSB, Indesit, Mauser, Siemens.

Professor at universities in Europe and North America; designed bachelor’s and master’s programs in strategic design and management; taught in MBA, MS, and MFA programs; led faculty teaching project-based learning courses; created new formats for online studio courses.

The author presents his approach to planning and progress evaluation in project development from a strategic design perspective. Some key pieces of advice:

- Don’t underestimate the power of observation during the research stage, even if it’s hard to convey its value to stakeholders.

- Building a “development matrix” can help to define desired outcomes and the worst-case scenario. A “development matrix”:

  - helps to define a spectrum of possible outcomes to assess how key functions perform

  - makes it easier to improve the product at different stages and to take into consideration both functional and emotional expectations