I’ve never been one to use a lot of inspirational tools, like decks of design method cards. Day to day, I figure I have a very solid understanding of core practices and can make others up if I need to. But I’ve also been the leader of a fast-paced team that has been asked to solve all kinds of difficult problems through research and design, so sticking to my personal top five techniques was never an option. After all, only the most basic real-world research goals can be attained without combining and evolving methods.

So I was quite intrigued when I received a copy of Bella Martin and Bruce Hanington’s Universal Methods of Design, which presents summaries of 100 different research and analysis methods as two-page spreads in a nice, large-format hardback. Could this be the ideal reference for a busy research team with a lot of chewy problems to solve?

In short: yes. It functions as a great reference when we hear of a method none of us is familiar with, but more importantly it’s an excellent “unsticker” when we run into a challenge in the design or analysis of a study. I have a few quibbles with organization that I’ll get to in a minute, but in general this is a book that every research team should have on hand.

The structure of Universal Methods of Design is a simple alphabetical list. Each numbered technique has a thoroughly footnoted history and overview on the left page, paired with a set of visual examples on the right page. The book also categorizes methods by which design phase they’re appropriate for, and along five key axes:

- behavioral/attitudinal

- quantitative/qualitative

- innovative/adapted/traditional

- exploratory/generative/evaluative

- participatory/observational/self-reporting/expert review/design process

These two rubrics anchor the two facing pages of the method spreads (Design Phase on the right and the five axes on the left); methods are also coded by design phase in the Table of Contents.

I expected the book to offer a flood of information on methods that were new to me; in fact I was a little intimidated at first by the idea of exactly how many methods, out of a hundred, would be new, and how much I would need to learn. But there’s much more to it. When I read the book from cover to cover (something most readers probably won’t do, although at least a flip-through is a must), one of the biggest rewards was learning the history and seminal examples for research methods that I was familiar with in practice but hadn’t studied formally.

For example, I’ve been to my share of KJ affinity diagramming sessions (#49) but had never known that the technique was developed in the 1960s as one of the practices of Total Quality Control, or even that KJ stood for Kawakita Jiro. While I didn’t need those pieces to use the technique effectively, understanding them gave me additional context and made me think. Bodystorming (#07) was another one. When a colleague convinced me to try it with a staid financial-industry client a few years ago, I wasn’t aware that a team at Interval Research had specifically designed it to bring the empathetic aspect of role-playing further forward into the actual creation phase of design. Our effort to get those buttoned-down clients out of their chairs and into actively designing was a big success, and learning more about the technique’s evolution helped me understand why and where it could be applicable again.

I thought hard about Personas (#63) over my last couple of years at Bolt Peters as we engaged in some critiques and evolution of the method, and I thought I knew that technique at a fairly deep level. But even there Universal Methods of Design (UMoD) provided me with a useful surprise: a note referenced an alternate technique of designing for fictional, exaggerated characters, a sub-method I had never heard of. I looked up the article (every topic spread is footnoted clearly) and found another intriguing way to push the boundaries of persona development.

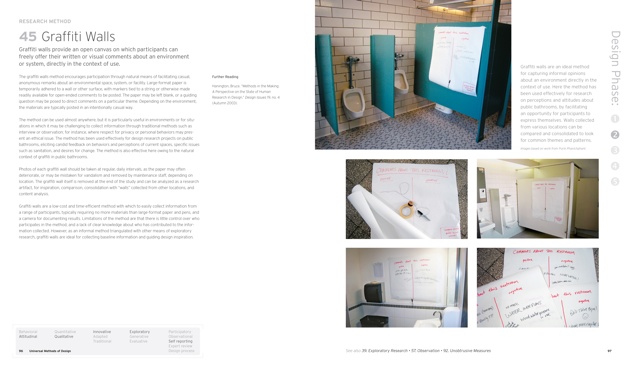

And the entries for methods that were new to me, while numerous, were much more fascinating than intimidating. The simple, low-cost technique called Graffiti Wall (#45) looks like a very useful option for certain kinds of guerrilla studies for startups, a common and challenging requirement in my practice. The method spread has a brilliant photo of the technique in use in a public restroom, with important tips on how to execute it: what materials to use, how often to take photos to document the data collected, and how to manage and consolidate data from multiple walls. I will be putting this one into practice soon!

The topic spread on Cultural Probes (#24), an intensive ethnographic method that many of my clients could never fund, contains a wealth of well-laid-out specifics for participant set-up in longitudinal remote research. This made me re-evaluate the experience of being a participant in one of our ordinarily less demanding studies. We do OK, but there are steps we could take to make participation smoother. I’ll be implementing those, too.

It’s true that a few of the methods, like Simulation Exercises (#77) where participants wear “age gain” suits to experience physical environments as an elderly person would, and Personal Inventories (#62), an open-ended technique where researchers collect and curate meaningful artifacts in concert with participants, will probably never become part of my core toolkit. But far from those being in the way, their presence made the book more rewarding, largely due to the authors’ cleverness in setting up the method spreads. The reader’s first exposure to a new method is not just concise, but highly salient—much of this is due to the fact that the authors convinced so many practitioners to share artifacts and photos, and annotated them so carefully. After five minutes with a method in UMoD, I could envision even very unfamiliar techniques in practice and consider how parts of them could be adapted to the projects I work on.

These wonderful new ideas are a great strength of the book, but I had no way to evaluate, for a complicated method like Simulation Exercises, whether the information provided was really enough to get a researcher started if she wanted to put it into practice. So I went back with a critical eye to the methods that are already part of my toolkit. Methods #05 (Automated Remote Research), #69 (Remote Moderated Research), and #88 (Time-Aware Research) cite a book by my former boss, Nate Bolt, and cover methods we used daily at Bolt Peters. These are the methods I know best: I’ve trained many researchers in them and have a well-developed sense of what’s needed to get going. The method spreads for all three techniques get the critical points right and explain them clearly; the authors have even provided a cartoon storyboard for the process of intercept recruiting in Time-Aware Research, and a good mathematical explanation of the live recruiting funnel for that method as well. I would feel confident recommending these summaries to someone who wanted to get started in the methods, and so by inference I believe that practitioners of different styles of research can rely on the authors’ judgment too.

I could go on. There are many useful and well-described methods I haven’t even mentioned yet, although that does bring up my one major concern about UMoD: the book, unfortunately, does not provide an index, which is a significant drawback. Several times, I wanted to go back and look for methods for a specific design phase or for evaluative methods on the Evaluative/Generative/Exploratory axis, but there was no way to do so efficiently. If you don’t know or at least recognize the name of the method you’re looking for, flipping through is the only affordance available.

The few quibbles I have besides the missing index relate to the inclusion of a few methods that seem overly broad—Interviews (#48) represents an awful lot of ground to cover in a page, and since there are also several sub-types of interviews discussed, it’s a little bit confusing. Observation (#57) suffers for similar reasons. But overall, the book is a great reminder of (and guide to) the wealth of methodological components one can draw on in designing a research project. It’s found a permanent place on my desk—except when one of my team members is borrowing it.

Cyd Harrell is UX Evangelist at Code for America, where she gets to spend all day helping create better experiences for citizens. She encourages like-minded UXers to apply for the 2014 CfA Fellowship. Before its acquisition in June 2012, she was VP of UX Research at Bolt Peters in San Francisco.